Modular Data Centers

Expert Design, Rapid Delivery, Smooth Operation

nanoDC designs, delivers, and operates innovative modular containerised data centers to empower your IT and AI infrastructure.

nanoDC modules can be built to any size and deployed anywhere with appropriate power and connectivity.

They can be engineered to host CPUs, GPUs, or a mix of both, either as standalone units or as tightly meshed local or distributed modules that operate as a single, tightly synchronised data center.

Consultancy for Modular Data Centers

nanoDC specializes in tailored consultancy services focused on the design, construction, and operation of traditional, modular and containerized data centers, combining innovation, reliability, and scalability to support enterprise IT and advanced AI infrastructures.

Blog

Explore expert articles and resources on modular and containerized data center design, delivering valuable insights to empower your infrastructure decisions.

-

Exploring the Role of Modular Design in Edge Data Centers

As enterprises push computing power closer to end users, edge data centers have become…

-

12 Expert Tips for Efficient Data Center Construction

Data center construction is where meticulous planning meets unforgiving reality. Whether you’re building your…

Experience Tailored Solutions for Data Center Excellence

Discover how nanoDC’s modular designs transform data center deployment with efficiency, scalability, and AI-ready infrastructure.

Modular Design Expertise

Our modular approach ensures rapid deployment, flexibility for evolving needs, and cost-effective scalability tailored to your IT and AI workloads.

AI-Optimized Infrastructure

Specialized consultancy ensures your data center supports demanding AI applications with cutting-edge cooling, power, and network solutions.

Seamless Integration

We guide the integration of containerized data centers into your existing environments, minimizing disruption and maximizing performance.

Our Approach

Discover our tailored consultancy process, designed to guide you through every stage of modular and containerized data center projects.

Step One: Initial Consultation

We assess your current infrastructure needs and outline how modular solutions can optimize your data center performance.

Step Two: Custom Design

Our experts craft bespoke modular layouts tailored for both traditional IT and advanced AI applications, ensuring scalability.

Step Three: Implementation

We oversee the construction and deployment phases to guarantee your data center operates seamlessly and efficiently.

Expert Modular Data Center Design Services

Contact us with ease: find our locations, phone lines, and email addresses here.

USA: 1888 Victoria Landing, San Jose, CA 95132

UK:

info@nanodc.net

Welcome to Our Blog

Stay updated with expert insights, advice, and stories. Discover valuable content to keep you informed, inspired, and engaged with the latest trends and ideas.

-

As enterprises push computing power closer to end users, edge data centers have become critical infrastructure for reducing latency and improving application performance. From autonomous vehicles requiring split-second processing to augmented reality applications demanding real-time responsiveness, the need for distributed computing at the network’s edge has never been more urgent. At the heart of this transformation is modular design—a flexible, scalable approach that’s reshaping how we deploy and manage distributed computing resources.

Perhaps the most profound impact of modular edge design lies not in its technical specifications, but in its power to democratize access to advanced computing infrastructure. For decades, sophisticated data center capabilities remained the exclusive domain of large enterprises with deep pockets and specialized expertise. Modular design changes this equation fundamentally.

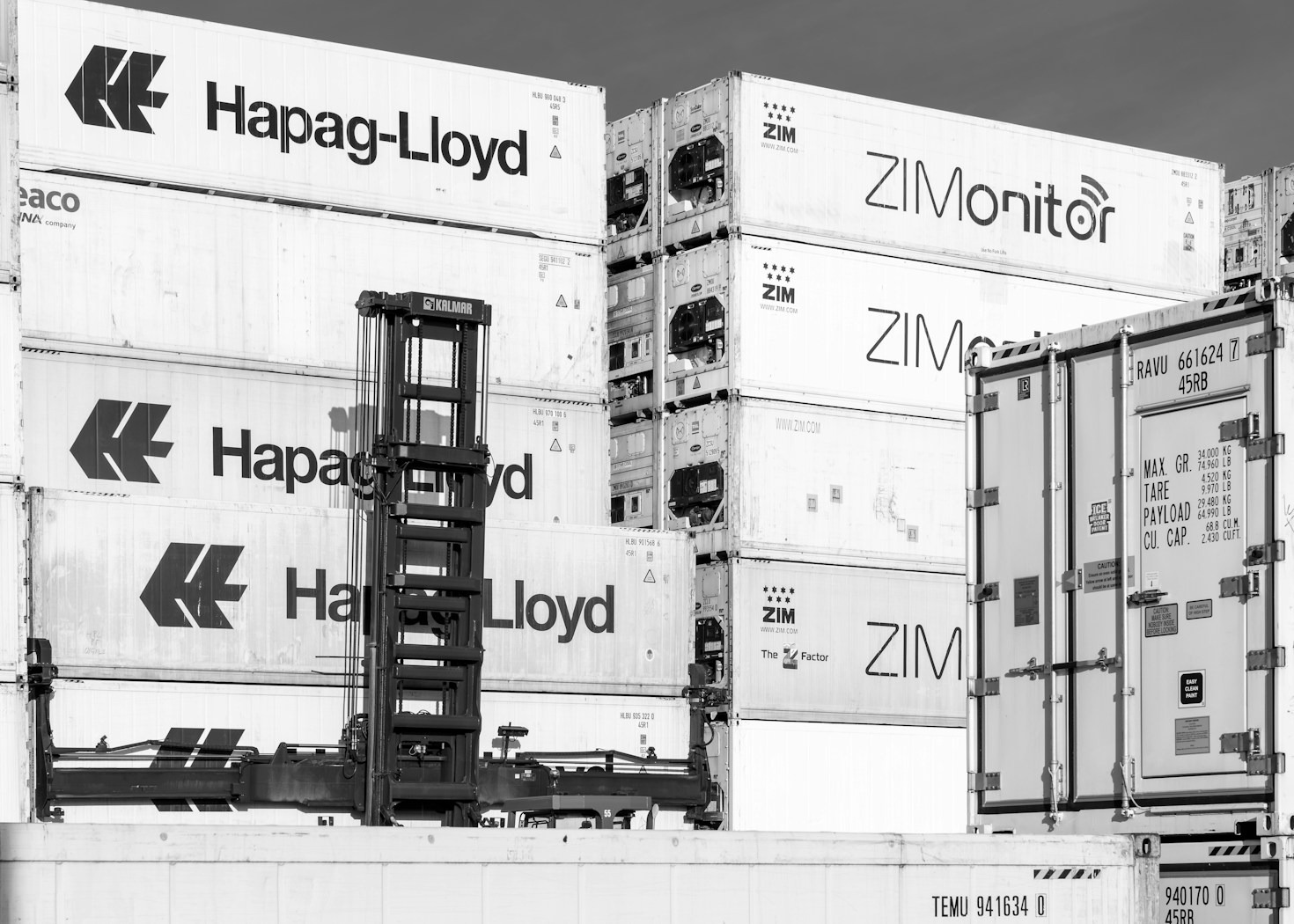

Modular edge data centers use prefabricated, self-contained units that integrate power, cooling, and IT infrastructure into standardized components. Unlike traditional data centers built from the ground up with custom specifications, these modules arrive at deployment sites as complete, tested systems ready for immediate integration. Each module functions as a micro data center, housing servers, networking equipment, uninterruptible power supplies, and cooling systems within a single enclosure that can range from the size of a shipping container to a small room.

This design philosophy addresses several key challenges inherent to edge computing. Rapid deployment timelines become achievable when construction happens in controlled factory environments rather than on-site. Space constraints in urban environments, retail locations, or cellular tower sites are more easily accommodated with compact, efficient modules. The need for consistent performance across geographically dispersed locations is met through standardization—every module delivers predictable capacity and reliability regardless of where it’s deployed. A facility that might take 18 months to build traditionally can now be operational in weeks, dramatically accelerating time-to-market for new services and applications.

The scalability advantages are equally compelling. Organizations can start small and expand capacity incrementally as demand grows, avoiding the capital risk of overbuilding. This pay-as-you-grow model aligns infrastructure investment directly with business needs—particularly valuable in edge computing where demand patterns can be unpredictable. A retail chain, for example, might deploy basic modules initially and add compute capacity during peak shopping seasons or as customer traffic grows. Telecommunications providers can position modules near 5G towers and scale them based on actual network usage rather than projected estimates.
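To make the pay-as-you-grow idea concrete, here is a minimal sketch comparing capital spent per year under an upfront build and under incremental module additions. The module size, cost per kW, demand forecast, and planned capacity are all illustrative assumptions, not nanoDC figures.

```python
# Hypothetical comparison of an upfront build vs. incremental modular deployment.
# All figures (module size, cost per kW, demand forecast) are illustrative assumptions.
import math

MODULE_KW = 250          # assumed IT capacity per module
COST_PER_KW = 10_000     # assumed capital cost per kW, identical for both approaches for simplicity

def modules_needed(demand_kw: float) -> int:
    """Smallest number of modules whose combined capacity covers demand."""
    return math.ceil(demand_kw / MODULE_KW)

def incremental_capex(demand_by_year: list[float]) -> list[float]:
    """Capital spent each year when modules are added only as demand requires."""
    spend, installed = [], 0
    for demand in demand_by_year:
        target = max(installed, modules_needed(demand))   # modules are never removed
        spend.append((target - installed) * MODULE_KW * COST_PER_KW)
        installed = target
    return spend

def upfront_capex(demand_by_year: list[float], planned_kw: float) -> list[float]:
    """Capital spent when the full planned capacity is built in year one."""
    return [planned_kw * COST_PER_KW] + [0.0] * (len(demand_by_year) - 1)

demand = [300, 450, 700, 800, 1000]   # assumed kW of IT demand in years 1-5
print(incremental_capex(demand))
print(upfront_capex(demand, planned_kw=1500))
```

The sketch deliberately ignores the time value of money and any per-kW premium that modular units may carry; both would change the comparison in a real business case.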

Modular designs also improve operational efficiency across multiple dimensions. Standardized components simplify maintenance procedures, reduce spare parts inventory requirements, and enable remote management capabilities across multiple sites through centralized monitoring platforms. When issues arise, entire modules can be swapped out with minimal downtime—a crucial advantage for edge locations that may lack on-site technical staff or where prolonged outages would significantly impact business operations. The factory-assembled nature of modules also means higher build quality and more rigorous pre-deployment testing compared to field-assembled systems.

Energy efficiency represents another significant benefit. Modern modular designs incorporate advanced cooling technologies like liquid cooling, hot aisle containment, and intelligent airflow management optimized for smaller footprints. Power distribution is streamlined with integrated UPS systems and power management software that can dynamically allocate resources based on workload demands. Many modules now include options for renewable energy integration, allowing edge sites to reduce their carbon footprint while lowering operational costs.

The economic model shifts favorably as well. Modular infrastructure can be treated as operational expenditure rather than capital expenditure in many financial structures. Leasing options, vendor-managed services, and infrastructure-as-a-service models become more viable when dealing with standardized, portable units. This financial flexibility appeals particularly to organizations testing new markets or deploying edge computing as part of digital transformation initiatives where business cases are still evolving.

As 5G networks expand and IoT deployments accelerate, the edge computing landscape will only grow more complex. Smart cities will require distributed computing nodes throughout urban infrastructure. Manufacturing facilities will deploy edge resources to support real-time analytics and automation. Healthcare providers will need low-latency processing for telemedicine and remote patient monitoring. Modular design provides the architectural foundation to meet this challenge with agility, efficiency, and scale.

Wrapping Up with Key Insights

Modular design has emerged as the cornerstone of successful edge data center deployment strategies. The combination of rapid deployment, incremental scalability, operational simplification, and financial flexibility makes modular approaches ideally suited for the distributed, dynamic nature of edge computing. Organizations embracing this architecture gain the ability to respond quickly to market opportunities, manage infrastructure costs more effectively, and maintain consistent service quality across diverse locations. As edge computing continues its trajectory from emerging technology to essential infrastructure, modular design principles will play an increasingly central role in shaping how we build, deploy, and manage the distributed computing resources powering tomorrow’s digital experiences. The question for most organizations is no longer whether to adopt modular edge infrastructure, but how quickly they can implement it to maintain competitive advantage.

-

Data center construction is where meticulous planning meets unforgiving reality. Whether you’re building your first facility or your fiftieth, the difference between success and costly setbacks often comes down to lessons learned the hard way—under raised floors, during emergency outages, and through projects that went sideways despite the best intentions. These twelve tips distill practical wisdom from years spent in the trenches, offering insights that go beyond textbook theory to address the real-world challenges that separate exceptional data centers from merely functional ones.

The truth about data center construction is humbling: you’re building critical infrastructure that must work flawlessly on day one and adapt gracefully through technological revolutions you cannot predict.

1. Plan for Scalability from Day One

Design your infrastructure with modular growth in mind. Build in phases, but plan the entire footprint upfront, including power feeds, cooling loops, and structural capacity. This prevents costly retrofits and allows you to expand without disrupting operations.

2. Prioritize Power Density Mapping Early

Don’t wait until equipment arrives to plan power distribution. Map out rack-level power density requirements during the design phase. This avoids over-provisioning some areas while creating bottlenecks in others, optimizing both capital and operational expenditure.

3. Implement Prefabrication Wherever Possible

Leverage factory-built components for electrical rooms, cooling systems, and even raised floor sections. Prefabrication can reduce on-site labor by 30-40%, improves quality control, and significantly compresses construction schedules.

4. Build Commissioning into Your Timeline

Too many projects treat commissioning as an afterthought. Integrate testing protocols throughout construction, not just at the end. Progressive commissioning catches issues early, when they’re cheaper to fix, and ensures systems work together as intended.

5. Design for Operational Access

Your construction team isn’t the only one who needs to navigate the facility. Plan maintenance corridors, cable tray accessibility, and equipment clearances with long-term operations in mind. A facility that’s difficult to maintain becomes exponentially more expensive to operate.

6. Establish Clear Cable Management Standards

Poor cable management causes 60% of network downtime incidents. Define and enforce labeling conventions, routing standards, and documentation requirements before the first cable is pulled. This discipline pays dividends for decades.

7. Don’t Underestimate Site Logistics

Coordinate delivery schedules meticulously. Large transformers, chillers, and generators require crane access and specific sequencing. A single delivery conflict can cascade into weeks of delays. Create a detailed logistics plan and update it religiously.

8. Invest in Redundancy Testing

Simply installing N+1 or 2N systems isn’t enough. Test every failover scenario under load before going live. Many “redundant” systems have single points of failure that only reveal themselves during actual outages, when it’s too late.

9. Build Flexibility into Cooling Infrastructure

IT loads and efficiency standards evolve constantly. Design cooling systems that can adapt to different strategies, such as hot aisle containment, liquid cooling, and rear-door heat exchangers. The extra upfront investment in flexibility prevents obsolescence.

10. Document Everything in Real Time

As-built documentation created months after construction is invariably inaccurate. Capture photos, measurements, and configuration details as work progresses. This documentation becomes invaluable during troubleshooting and future expansions.

11. Engage Operators During Design

The people who will run the facility daily have insights designers miss. Involve operations staff early to review layouts, access points, and monitoring strategies. Their practical experience prevents design flaws that look good on paper but fail in reality.

12. Plan for Decommissioning from the Start

Data centers have lifecycles. Design systems that can be upgraded or replaced without massive disruption. Modular components, accessible cable pathways, and well-documented interdependencies make future changes manageable rather than nightmarish.
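Tip 2 (power density mapping) lends itself to a simple design-phase check. The sketch below sums planned rack loads per row and compares each row against an assumed distribution rating, surfacing both stranded headroom and bottlenecks. The 120 kW row limit and the rack loads are hypothetical values chosen for illustration.

```python
# Hypothetical rack-level power density map, aggregated per row and checked
# against an assumed per-row distribution rating.
from collections import defaultdict

ROW_LIMIT_KW = 120.0   # assumed rating of each row's busway/PDU feed

# (row, rack, planned IT load in kW): illustrative design-phase estimates
racks = [
    ("A", "A01", 8.0), ("A", "A02", 8.0), ("A", "A03", 6.5),
    ("B", "B01", 40.0), ("B", "B02", 45.0), ("B", "B03", 42.0),
]

def row_loads(rack_list):
    """Sum planned rack loads per row."""
    totals = defaultdict(float)
    for row, _rack, kw in rack_list:
        totals[row] += kw
    return dict(totals)

def check_rows(totals, limit_kw=ROW_LIMIT_KW):
    """Report each row as over its feed rating or with spare headroom."""
    report = {}
    for row, kw in sorted(totals.items()):
        if kw > limit_kw:
            report[row] = f"OVER by {kw - limit_kw:.1f} kW"
        else:
            report[row] = f"headroom {limit_kw - kw:.1f} kW"
    return report

for row, status in check_rows(row_loads(racks)).items():
    print(row, status)
```

Row A in this example strands most of its feed capacity while row B exceeds its rating, which is exactly the imbalance early mapping is meant to catch before equipment arrives.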

The Bottom Line…

Efficient data center construction isn’t about cutting corners—it’s about intelligent planning, disciplined execution, and designing for the entire lifecycle rather than just ribbon-cutting day. The facilities that perform best twenty years after opening are those where construction teams thought beyond the immediate build and considered every phase of operation, maintenance, and evolution. Your construction approach today determines whether you’ll have a strategic asset or an operational burden tomorrow.

-

The artificial intelligence revolution isn’t just changing how we process data—it’s fundamentally reshaping the physical infrastructure that houses our computing power. Traditional data centers, designed for general-purpose workloads and conventional server architectures, find themselves increasingly mismatched to the demands of AI and machine learning operations. As organizations race to deploy AI capabilities, the question is no longer whether to customize data centers for AI, but how quickly and effectively they can make the transformation.

Customizing data centers for AI means fundamentally reimagining power delivery, cooling infrastructure, and network architecture to handle extreme densities and unique workload patterns, while building in the flexibility to adapt as rapidly as AI technology itself evolves. The result is a general-purpose facility transformed into a specialized engine that balances today’s demanding requirements with tomorrow’s unpredictable innovations.

The Power Density Challenge

AI workloads have shattered conventional assumptions about power consumption. Where traditional servers might draw 5-8 kW per rack, AI-optimized configurations with high-density GPU clusters routinely demand 30-50 kW per rack, with cutting-edge deployments pushing toward 100 kW and beyond. This isn’t an incremental change; it’s a fundamental shift that renders traditional power distribution strategies obsolete.

Customizing for AI begins with power infrastructure designed from the ground up for these extreme densities. This means oversized electrical feeds, strategically positioned power distribution units, and busway systems that can deliver massive amperage directly to equipment rows. Floor space becomes secondary to power availability—an AI data center might have half the rack count of a traditional facility but consume twice the power.
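A quick calculation shows why amperage becomes the constraint. For a balanced three-phase load, line current is I = P / (√3 × V × PF). The sketch below assumes a 415 V line-to-line supply (common in the UK and Europe; North American sites often use 480 V) and a power factor of 0.95; both are assumptions, not design values.

```python
import math

def three_phase_current(power_kw: float, line_voltage_v: float = 415.0,
                        power_factor: float = 0.95) -> float:
    """Line current in amps for a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF)."""
    return power_kw * 1000 / (math.sqrt(3) * line_voltage_v * power_factor)

# Traditional rack, typical AI rack, and a cutting-edge AI rack from the text above
for kw in (8, 40, 100):
    print(f"{kw:>3} kW rack -> {three_phase_current(kw):6.1f} A per phase")
```

Under these assumptions a 100 kW rack draws roughly 146 A per phase versus about 12 A for an 8 kW rack, which is why busway and feed sizing dominate AI-ready layouts.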

Cooling: The Make-or-Break Factor

Traditional air cooling systems hit their limits well before reaching AI-level power densities. While approaches like hot aisle containment and optimized airflow can push air cooling to perhaps 20-25 kW per rack, serious AI deployments demand liquid cooling. The physics are inescapable: water has roughly 3,500 times the volumetric heat capacity of air, making liquid the only practical medium for removing the thermal loads AI generates.
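The heat-capacity gap translates directly into flow rates. The volumetric flow needed to carry a heat load P with a temperature rise ΔT is Q = P / (ρ × c_p × ΔT). This sketch uses approximate room-temperature properties for water and air, a 50 kW rack, and an assumed 10 K temperature rise.

```python
# Volumetric flow needed to carry away a heat load: Q = P / (rho * c_p * dT).
# Property values are approximate at room temperature; the 10 K rise is an assumption.
WATER = {"rho": 998.0, "cp": 4186.0}   # kg/m^3, J/(kg*K)
AIR = {"rho": 1.2, "cp": 1005.0}       # kg/m^3, J/(kg*K)

def flow_m3_per_s(heat_w: float, fluid: dict, delta_t_k: float) -> float:
    return heat_w / (fluid["rho"] * fluid["cp"] * delta_t_k)

heat = 50_000.0   # assumed 50 kW rack
dt = 10.0         # assumed coolant temperature rise in kelvin

water_lpm = flow_m3_per_s(heat, WATER, dt) * 1000 * 60    # litres per minute
air_cfm = flow_m3_per_s(heat, AIR, dt) * 35.3147 * 60     # cubic feet per minute
ratio = (WATER["rho"] * WATER["cp"]) / (AIR["rho"] * AIR["cp"])
print(f"water: {water_lpm:.0f} L/min, air: {air_cfm:.0f} CFM, "
      f"volumetric heat capacity ratio: {ratio:.0f}")
```

Roughly 72 L/min of water does the work of nearly 8,800 CFM of air for the same rack, and the computed ratio lands close to the 3,500x figure cited above.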

Customization for AI cooling takes multiple forms. Direct-to-chip liquid cooling delivers coolant directly to processors and GPUs, removing heat at the source with remarkable efficiency. Rear-door heat exchangers mount on the back of racks and capture heat as it leaves the equipment, in a compact form factor. Immersion cooling submerges entire servers in dielectric fluid, achieving the highest cooling density available while dramatically reducing fan energy. Each approach requires different facility infrastructure, from coolant distribution networks to heat rejection systems sized for concentrated thermal loads.

The strategic decision isn’t just which cooling technology to adopt, but how to implement it without orphaning existing infrastructure. Hybrid approaches that combine air cooling for traditional workloads with liquid cooling for AI clusters offer flexibility during transition periods, though they add complexity to facility management.

Network Architecture Reimagined

AI workloads communicate differently than traditional applications. Model training involves massive parameter synchronization across hundreds or thousands of GPUs, creating east-west traffic patterns that dwarf typical north-south data flows. Inference workloads demand ultra-low latency between processing stages. These requirements push network architecture in new directions.
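One way to size that east-west traffic is to assume data-parallel training with ring all-reduce, a common (but not the only) gradient synchronization algorithm. In a ring all-reduce over N GPUs, each GPU sends about 2(N−1)/N times the gradient size per step. The 7-billion-parameter model and 2-byte gradients below are illustrative assumptions.

```python
def ring_allreduce_bytes_per_gpu(num_gpus: int, gradient_bytes: float) -> float:
    """Bytes each GPU sends in one ring all-reduce: 2 * (N - 1) / N * S."""
    if num_gpus < 2:
        return 0.0   # nothing to synchronize on a single GPU
    return 2 * (num_gpus - 1) / num_gpus * gradient_bytes

params = 7e9            # assumed 7-billion-parameter model
bytes_per_param = 2     # assumed fp16/bf16 gradients
grad_bytes = params * bytes_per_param

for n in (8, 64, 1024):
    gb = ring_allreduce_bytes_per_gpu(n, grad_bytes) / 1e9
    print(f"{n:>5} GPUs: ~{gb:.1f} GB sent per GPU per synchronization step")
```

Per-GPU traffic stays close to twice the gradient size regardless of cluster size, so aggregate east-west traffic grows roughly linearly with GPU count, on every training step.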

Customizing for AI means deploying high-bandwidth, low-latency fabrics optimized for GPU-to-GPU communication. InfiniBand and proprietary interconnects like NVIDIA’s NVLink provide the multi-terabit throughput AI clusters demand. Network topology shifts from hierarchical designs toward spine-and-leaf or even flat architectures that minimize hop counts and latency variability. Packet loss that might be acceptable for web traffic becomes catastrophic when it disrupts tightly synchronized training operations.
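A two-tier leaf-spine fabric can be sized with simple port arithmetic. This sketch assumes identical switches with a given port count (radix), one link between every leaf and every spine, and equal port speeds; real designs vary, and AI training fabrics often aim for a non-blocking (1:1) ratio.

```python
def leaf_spine_capacity(radix: int, downlinks_per_leaf: int) -> dict:
    """Size a two-tier leaf-spine fabric built from identical switches with `radix` ports.

    Each leaf splits its ports into downlinks (to servers/GPUs) and uplinks (one per spine).
    Each spine port connects to one leaf, so the fabric holds at most `radix` leaves.
    """
    uplinks = radix - downlinks_per_leaf
    if uplinks <= 0:
        raise ValueError("a leaf needs at least one uplink")
    return {
        "spines": uplinks,
        "leaves": radix,
        "endpoints": radix * downlinks_per_leaf,
        "oversubscription": downlinks_per_leaf / uplinks,
    }

print(leaf_spine_capacity(64, 32))   # non-blocking, 1:1
print(leaf_spine_capacity(64, 48))   # 3:1 oversubscribed
```

Trading uplinks for downlinks adds endpoints but raises oversubscription, which is usually acceptable for web traffic and much less so for tightly synchronized training.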

Space Planning and Layout Optimization

AI infrastructure clusters differently than traditional workloads. Training clusters benefit from physical proximity to minimize interconnect latency, leading to dense pod architectures where hundreds of GPUs occupy contiguous floor space. This contradicts traditional data center layouts optimized for distributed, loosely coupled systems.

Customized AI facilities embrace pod-based designs where power, cooling, and networking are provisioned in concentrated zones supporting specific AI workload types. Rather than uniform rows of identical racks stretching across the floor, AI data centers might feature distinct neighborhoods—training pods with maximum density and interconnect bandwidth, inference zones optimized for low latency and high throughput, and development areas with more flexible configurations.

Storage Infrastructure Evolution

AI’s hunger for data reshapes storage requirements dramatically. Training modern large language models involves processing trillions of tokens, demanding storage systems that can feed data to GPUs at sustained multi-terabyte-per-second rates. Traditional storage arrays, designed for transactional workloads with random access patterns, struggle with AI’s sequential, high-throughput demands.

Customization means deploying parallel file systems like Lustre or GPFS that can aggregate bandwidth across dozens of storage nodes. NVMe over Fabrics (NVMe-oF) eliminates protocol conversion overhead, delivering near-native SSD performance across the network. Storage tiering becomes crucial: fast NVMe for active datasets, high-capacity disk for model checkpoints and archives, with automated data movement between tiers based on training schedules and access patterns.
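Automated tiering ultimately reduces to a placement policy. The sketch below is a hypothetical policy, not a feature of Lustre or GPFS: data scheduled for an upcoming training run, or accessed within an assumed seven-day window, belongs on NVMe; everything else is demoted to the capacity tier. The `Dataset` type and the dataset names are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Dataset:
    name: str
    tier: str                 # "nvme" (hot) or "capacity" (disk)
    last_access: datetime
    scheduled_for_training: bool = False

HOT_WINDOW = timedelta(days=7)   # assumed: demote data idle for more than a week

def plan_moves(datasets, now):
    """Return (name, from_tier, to_tier) moves under a simple recency/schedule policy."""
    moves = []
    for ds in datasets:
        wants_hot = ds.scheduled_for_training or (now - ds.last_access) <= HOT_WINDOW
        target = "nvme" if wants_hot else "capacity"
        if target != ds.tier:
            moves.append((ds.name, ds.tier, target))
    return moves

now = datetime(2024, 6, 1)
catalog = [
    Dataset("tokens-shard-001", "nvme", now - timedelta(days=1)),
    Dataset("checkpoint-epoch-3", "nvme", now - timedelta(days=30)),
    Dataset("tokens-shard-900", "capacity", now - timedelta(days=60), scheduled_for_training=True),
]
print(plan_moves(catalog, now))
```

The schedule flag lets data be prefetched to fast storage before a run starts, rather than waiting for access patterns to promote it.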

Reliability and Redundancy Reconsidered

Traditional data center design obsesses over eliminating single points of failure, building N+1 or 2N redundancy into every system. AI workloads challenge these assumptions. Large-scale training jobs include checkpoint mechanisms that save progress periodically—if hardware fails, you restart from the last checkpoint rather than losing everything. This fault tolerance at the application layer reduces the need for infrastructure-level redundancy.

Customizing for AI might mean accepting lower infrastructure redundancy in exchange for higher performance or density. Training clusters might run on N+0 power configurations, reinvesting the saved capital into additional compute capacity. The trade-off makes sense when application design already handles failures gracefully and when the cost of redundant infrastructure exceeds the business impact of occasional interruptions.
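The trade-off can be quantified. Assuming independent, exponentially distributed node failures, cluster MTBF falls as 1/N, so failures become routine at scale. Young’s approximation, a classic rule of thumb that the text above does not itself cite, then estimates a good checkpoint interval as √(2 × C × MTBF), where C is the time to write one checkpoint. The node MTBF, node count, and checkpoint cost below are hypothetical.

```python
import math

def cluster_mtbf_hours(node_mtbf_hours: float, nodes: int) -> float:
    """With independent, exponentially distributed failures, cluster MTBF scales as 1/N."""
    return node_mtbf_hours / nodes

def young_checkpoint_interval_s(checkpoint_cost_s: float, mtbf_s: float) -> float:
    """Young's first-order approximation of the optimal checkpoint interval: sqrt(2 * C * MTBF)."""
    return math.sqrt(2 * checkpoint_cost_s * mtbf_s)

# Assumed figures: 24,000 h per-node MTBF, 1,000 nodes, 5-minute checkpoint writes
mtbf_h = cluster_mtbf_hours(node_mtbf_hours=24_000, nodes=1_000)
interval_s = young_checkpoint_interval_s(checkpoint_cost_s=300, mtbf_s=mtbf_h * 3600)
print(f"cluster MTBF ~{mtbf_h:.0f} h, checkpoint every ~{interval_s / 3600:.1f} h")
```

Under these assumptions the cluster fails about once a day, but checkpointing every two hours bounds the work lost per failure, which is the application-level resilience that makes lower infrastructure redundancy defensible.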

Future-Proofing for Accelerating Change

AI technology evolves at a breathtaking pace. Today’s state-of-the-art GPU is likely to be superseded within two years. Inference techniques that seem cutting-edge now will be replaced by more efficient approaches. Customizing for AI means designing for continuous evolution rather than static optimization.

This requires infrastructure flexibility that traditional data centers rarely needed. Modular power distribution that can be reconfigured as density requirements change. Cooling systems with headroom for next-generation thermal loads. Network fabrics that can accommodate emerging interconnect standards. The most successful AI data centers aren’t optimized for today’s workloads—they’re designed to adapt to tomorrow’s unpredictable demands.

The Human Element

Perhaps the most overlooked aspect of AI data center customization is operational expertise. Managing liquid cooling systems requires different skills than air-cooled environments. Troubleshooting high-speed interconnects demands specialized knowledge. Optimizing GPU utilization involves understanding both infrastructure and AI frameworks.

Organizations customizing for AI must invest in training and recruitment, building teams that bridge traditional data center operations and AI/ML engineering. The facilities that excel aren’t just those with the best hardware—they’re those where operators understand AI workload characteristics well enough to optimize infrastructure in real-time.

Wrapping Up with Strategic Perspective

Customizing data centers for AI represents one of the most significant infrastructure challenges and opportunities of our era. The organizations that succeed won’t be those that simply purchase the latest GPUs and cram them into existing facilities—they’ll be those that holistically reimagine power, cooling, networking, and operations around AI’s unique demands.

The investment is substantial, but so is the potential return. AI capabilities increasingly define competitive advantage across industries. The data centers being customized for AI today will power the innovations that shape the next decade—from scientific breakthroughs enabled by massive simulations to personalized experiences delivered through sophisticated inference at scale.

The question isn’t whether your data center strategy should account for AI—it’s how quickly you can make the transition while maintaining operational stability and financial discipline. Start with pilot deployments that teach your organization what AI really demands. Build relationships with vendors pushing the boundaries of power delivery and cooling technology. Invest in the expertise needed to operate these sophisticated environments. And above all, design for flexibility, because the only certainty in AI infrastructure is that requirements will continue evolving faster than traditional planning cycles can accommodate.

The data centers being built and customized today will determine who leads and who follows in the AI-driven economy. Choose your customization strategy wisely.

-

Edge computing is redefining where and how we deploy IT infrastructure. As applications demand millisecond latencies, IoT devices proliferate exponentially, and 5G/6G networks push bandwidth to the network’s periphery, the traditional model of centralized data centers becomes increasingly inadequate. Edge infrastructure brings computing power closer to end users and data sources—into retail stores, manufacturing floors, telecommunications hubs, smart city nodes, and remote locations that have never hosted IT equipment before.

Edge infrastructure is compelling because it acknowledges a fundamental truth: the future of computing isn’t about building bigger centralized facilities, but about intelligently distributing capability to the multitude of endpoints where data originates and actions occur, meeting the world where it actually operates rather than forcing it to come to the core.

This distributed paradigm creates design challenges fundamentally different from those of traditional data centers: extreme space constraints, hostile environmental conditions, limited on-site expertise, and the need to deploy and manage thousands of sites efficiently. The innovative solutions emerging to address these challenges aren’t simply miniaturized versions of conventional approaches—they represent fundamentally new thinking about how infrastructure should be designed, deployed, and operated when distribution and autonomy become primary requirements.

Micro Modular Designs for Constrained Spaces

Traditional rack-based infrastructure assumes generous floor space and standard ceiling heights. Edge deployments often have neither. Innovative solutions embrace micro modular designs: complete computing environments compressed into cabinets, wall-mounted enclosures, or under-desk units measured in cubic feet rather than square meters. These micro modules integrate servers, networking, storage, power conditioning, cooling, and monitoring into very compact form factors, often supporting 10-20 kW of IT load in locations where traditional racks cannot fit.

Integrated Cooling for Diverse Environments

Edge locations lack the controlled environments that data centers take for granted. Equipment must operate in retail stockrooms, outdoor telecom shelters, factory floors with temperature swings and contaminants, or urban micro-pods exposed to weather extremes. Self-contained cooling systems using closed-loop designs protect IT equipment from environmental factors while managing thermal loads efficiently. Liquid cooling adapted to edge scale enables higher densities in smaller footprints, with heat rejection strategies that use ambient air when possible and switch to refrigeration when conditions demand.
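The "ambient when possible, refrigeration when necessary" strategy can be expressed as a small mode-selection rule. This is a minimal sketch: the 27 °C supply target, 4 K heat-exchanger approach, and 6 K partial-economizer band are assumed values, and a real controller would also handle humidity and hysteresis.

```python
from enum import Enum

class CoolingMode(Enum):
    FREE_COOLING = "free cooling"             # ambient air or dry cooler only
    PARTIAL = "partial economizer"            # ambient plus mechanical trim
    MECHANICAL = "mechanical refrigeration"   # compressor-based cooling

# Assumed thresholds for a closed-loop system
SUPPLY_TARGET_C = 27.0   # assumed supply-air target
APPROACH_C = 4.0         # assumed heat-exchanger approach temperature
PARTIAL_BAND_C = 6.0     # assumed band where ambient can still do part of the work

def select_mode(ambient_c: float) -> CoolingMode:
    free_limit = SUPPLY_TARGET_C - APPROACH_C          # 23 C under these assumptions
    if ambient_c <= free_limit:
        return CoolingMode.FREE_COOLING
    if ambient_c <= free_limit + PARTIAL_BAND_C:       # up to 29 C
        return CoolingMode.PARTIAL
    return CoolingMode.MECHANICAL

for t in (5.0, 25.0, 35.0):
    print(t, select_mode(t).value)
```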

Zero-Touch Deployment and Remote Management

Traditional deployment assumes skilled technicians performing installation and commissioning. Edge deployments occur at thousands of sites where such expertise is unavailable or prohibitively expensive. Equipment arrives pre-configured, automatically discovers network connectivity, and self-provisions into management systems. Remote monitoring becomes foundational—every edge site must be fully operable from centralized NOCs. Predictive maintenance using AI analyzes telemetry to identify issues before failures occur, with automated remediation handling routine problems without human intervention.
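Predictive maintenance platforms vary widely, but the core idea of flagging telemetry that drifts from its recent baseline can be sketched with a rolling z-score. This is a deliberately simple statistical stand-in, not the AI models the text refers to; the window size, threshold, and fan-speed samples are hypothetical.

```python
from collections import deque
from statistics import mean, stdev

class RollingZScoreDetector:
    """Flag a telemetry reading that deviates strongly from the recent baseline."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.readings = deque(maxlen=window)
        self.threshold = threshold

    def update(self, value: float) -> bool:
        anomalous = False
        if len(self.readings) >= 5:                      # require a minimal baseline first
            mu, sigma = mean(self.readings), stdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        self.readings.append(value)                      # anomalies also enter the baseline
        return anomalous

# Hypothetical fan-speed telemetry (RPM) from one edge cabinet
detector = RollingZScoreDetector()
samples = [4000, 4010, 3995, 4005, 3990, 4002, 3998, 5200]
flags = [detector.update(s) for s in samples]
print(flags)
```

In a fleet setting, a flag like this would open a ticket or trigger an automated remediation runbook at the central NOC rather than require someone on site.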

Resilience Through Simplicity and Security

Traditional reliability relies on redundant complex systems—N+1 cooling, 2N power, redundant network paths. Edge deployments achieve resilience through simplicity rather than redundancy: robust component selection withstands environmental stresses, application-level resilience tolerates individual site failures, and rapid replacement with hot-swappable modules minimizes downtime. Physical security receives equal attention—edge sites often exist in locations with limited protection, requiring tamper-evident enclosures, intrusion detection, secure boot processes, encryption for data at rest and in transit, and zero-trust network architectures that assume breach and limit lateral movement.

Power Efficiency and Renewable Integration

Edge locations face power constraints: limited electrical service, expensive utility rates, or battery-dependent installations. Advanced power management dynamically adjusts consumption based on workload and available capacity. Low-power processors optimized for edge workloads deliver the necessary performance while consuming a fraction of traditional server power. On-site generation such as solar, wind, or fuel cells reduces grid dependence where utility power is expensive or unreliable, and automated power management puts idle resources into low-power states to minimize environmental impact across thousands of distributed sites.
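Dynamic power management often comes down to dividing a limited budget among workloads. The sketch below assumes a simple priority scheme: every workload keeps its low-power minimum, and remaining power is granted in priority order. The `Workload` type, the workload names, and the wattages are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    name: str
    priority: int      # lower number = more important
    demand_w: float    # power at full performance
    min_w: float       # power in a low-power or idle state

def allocate(workloads, available_w):
    """Give every workload its minimum, then grant full demand in priority order while budget remains."""
    alloc = {w.name: w.min_w for w in workloads}
    remaining = available_w - sum(alloc.values())
    if remaining < 0:
        raise ValueError("available power cannot cover minimum loads")
    for w in sorted(workloads, key=lambda w: w.priority):
        extra = min(w.demand_w - w.min_w, remaining)
        alloc[w.name] += extra
        remaining -= extra
    return alloc

site = [
    Workload("video-analytics", 1, demand_w=900, min_w=200),
    Workload("pos-sync", 0, demand_w=300, min_w=100),
    Workload("batch-reporting", 2, demand_w=600, min_w=50),
]
print(allocate(site, available_w=1200))   # e.g. running on battery or reduced solar output
```

When the budget recovers, calling `allocate` again with the higher figure restores full performance, so the same logic serves both load shedding and recovery.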

Standardized Building Blocks at Scale

Managing thousands of unique edge deployments is operationally impossible. Aggressive standardization defines a limited set of reference architectures that address common edge use cases, each becoming a tested, validated building block deployed repeatedly. Standardization extends beyond hardware to network configurations, monitoring parameters, security policies, and operational procedures. When everything is standard, deployment becomes repeatable, troubleshooting becomes systematic, and procurement achieves economies of scale. Constraining variety keeps operational complexity manageable.
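With a fixed catalog of reference architectures, assigning a site becomes a lookup rather than a design exercise. This sketch uses a hypothetical three-entry catalog ordered from smallest to largest and returns the smallest entry that meets a site’s requirements; returning `None` signals that the site needs an exception review instead of a custom one-off design.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ReferenceArchitecture:
    name: str
    it_kw: float
    rack_units: int
    outdoor_rated: bool

# Hypothetical catalog, ordered from smallest to largest
CATALOG = [
    ReferenceArchitecture("RA-S wall-mount", 2, 12, False),
    ReferenceArchitecture("RA-M cabinet", 10, 42, False),
    ReferenceArchitecture("RA-L outdoor enclosure", 20, 84, True),
]

def select_architecture(required_kw, required_u, outdoor):
    """Return the smallest catalog entry that satisfies the site, or None if it needs review."""
    for ra in CATALOG:
        if (ra.it_kw >= required_kw and ra.rack_units >= required_u
                and (ra.outdoor_rated or not outdoor)):
            return ra
    return None

print(select_architecture(1.5, 8, outdoor=False).name)
print(select_architecture(6, 30, outdoor=True).name)
print(select_architecture(30, 40, outdoor=False))
```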

Adaptive Infrastructure for Evolving Requirements

Edge requirements evolve as applications, technologies, and business models change. Modular architectures allow component refresh without wholesale replacement. Flexible power and cooling accommodate density increases. Software-defined capabilities enable functional changes through configuration rather than hardware swaps. Designs anticipate technology transitions (5G to 6G, evolving AI inference, new application types) by providing headroom and flexibility. Adaptability is more valuable than current-state optimization, since infrastructure deployed today must serve requirements that don’t yet exist.

Orchestration Across Distributed Resources

Edge infrastructure isn’t isolated; it’s part of a distributed computing fabric spanning edge sites, regional aggregation points, and cloud resources. Orchestration platforms manage workload placement across this continuum, balancing latency, cost, data sovereignty, and resource availability. Automated workload migration responds to changing conditions such as network congestion, site failures, and demand fluctuations. Unified visibility provides an operational view across the distributed infrastructure, while financial models such as operational expenditure approaches and shared infrastructure facilities, combined with automated management, reduce costs to levels sustainable for distributed operations.
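Placement decisions across the edge-to-cloud continuum are often framed as hard constraints plus a weighted score. In this minimal sketch, capacity, a latency ceiling, and allowed regions (standing in for data sovereignty) filter the candidate sites, and a weighted sum of latency and cost picks among the survivors. The `Site` type, site names, and weights are hypothetical, not any specific orchestrator’s API.

```python
from dataclasses import dataclass

@dataclass
class Site:
    name: str
    latency_ms: float
    cost_per_hour: float
    region: str
    free_cpu: int

def place(workload_cpu, max_latency_ms, allowed_regions, sites,
          latency_weight=1.0, cost_weight=10.0):
    """Drop sites that violate hard constraints, then pick the lowest weighted score."""
    candidates = [
        s for s in sites
        if s.free_cpu >= workload_cpu
        and s.latency_ms <= max_latency_ms
        and s.region in allowed_regions          # data sovereignty constraint
    ]
    if not candidates:
        return None
    return min(candidates,
               key=lambda s: latency_weight * s.latency_ms + cost_weight * s.cost_per_hour)

sites = [
    Site("edge-store-17", 3, 0.90, "eu-west", 4),
    Site("regional-hub", 12, 0.40, "eu-west", 64),
    Site("cloud-us", 80, 0.20, "us-east", 1000),
]
print(place(2, 20, {"eu-west"}, sites).name)   # small, latency-sensitive job
print(place(8, 20, {"eu-west"}, sites).name)   # too large for the edge site
```

Re-running the same function when a site fails or fills up (for example, setting its `free_cpu` to zero) is one simple way to model automated migration.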

The Edge Design Philosophy

These innovative solutions share a common philosophy: edge infrastructure must embrace distribution as a fundamental characteristic rather than a problem to overcome. Success comes from designing for autonomy, standardization, simplicity, and adaptability, priorities fundamentally different from those of traditional data center design, which assumes centralization, customization, complexity, and stability.

Edge infrastructure represents one of the most significant shifts in how we architect and deploy computing resources. The innovations emerging aren’t incremental improvements—they’re fundamentally new design paradigms acknowledging that distributing infrastructure to thousands of locations creates challenges traditional thinking cannot solve. The organizations succeeding at edge deployment are those willing to abandon data center conventions and embrace design principles aligned with edge realities: constrained spaces, hostile environments, limited expertise, and massive scale requiring operational automation.

The edge infrastructure being deployed today will define computing capabilities for the next decade. These innovative solutions provide the design insights enabling that deployment to succeed.

-

Building a scalable modular data center requires a fundamentally different mindset than traditional construction. The goal isn’t just to create infrastructure that works today—it’s to establish a foundation that can evolve gracefully through years of technological change, business growth, and shifting requirements.

In an era where technological change outpaces traditional planning cycles and business agility determines survival, the modular approach isn’t just important; it’s the only infrastructure strategy that matches deployment speed, financial flexibility, and operational adaptability to the pace at which modern organizations actually operate.

Scalability in modular design means more than simply adding capacity; it means creating systems where expansion is anticipated, planned for, and executed without disrupting existing operations or requiring wholesale redesign. The organizations that succeed are those that think systematically about how today’s decisions enable or constrain tomorrow’s options. These key considerations provide a framework for building modular infrastructure that grows as intelligently as the businesses it serves.

1. Design for Incremental Growth from Day One

Scalability begins with initial design decisions that accommodate future expansion. This means planning the complete build-out even if you’re only deploying a fraction initially. Consider power infrastructure—install electrical feeds and distribution systems sized for ultimate capacity, even if early modules use only a portion. Plan cooling systems with expansion loops and capacity headroom. Establish network backbone architecture that won’t require replacement as you add modules. The incremental approach fails when early decisions create bottlenecks that force expensive retrofits later. Successful scalable design anticipates the endpoint and works backward to ensure each phase naturally enables the next.

2. Standardize Module Specifications Rigorously

Scalability depends on consistency. Establish strict standards for module specifications—power capacity, cooling capacity, rack configurations, network connectivity, monitoring interfaces. When every module adheres to these standards, scaling becomes straightforward: order another unit that integrates seamlessly. Deviation from standards creates operational complexity that compounds with each addition. Document everything: power distribution schemes, cooling configurations, network topology, cable management practices. Standardization isn’t about limiting flexibility—it’s about creating predictable building blocks that can be combined reliably regardless of deployment timing or location.
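One way to make a standard enforceable rather than aspirational is to record it as data and check every module against it before acceptance. The sketch below assumes a hypothetical standard: the field names and values are illustrative, and `redfish`/`snmp` are simply labels for the monitoring interface.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class ModuleSpec:
    power_kw: float
    cooling_kw: float
    racks: int
    uplink_gbps: int
    monitoring: str  # monitoring interface label, e.g. "redfish" or "snmp"


# Hypothetical site standard that every new module must match.
STANDARD = ModuleSpec(power_kw=400, cooling_kw=400, racks=10,
                      uplink_gbps=400, monitoring="redfish")


def deviations(spec: ModuleSpec, standard: ModuleSpec = STANDARD) -> List[str]:
    """Names of the fields where a module departs from the standard."""
    return [f.name for f in fields(standard)
            if getattr(spec, f.name) != getattr(standard, f.name)]


candidate = ModuleSpec(power_kw=400, cooling_kw=350, racks=10,
                       uplink_gbps=400, monitoring="snmp")
print(deviations(candidate))  # ['cooling_kw', 'monitoring']
```

An empty list means the module is a drop-in building block; anything else is a deviation to be rejected or explicitly documented.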

3. Plan Power Infrastructure with Massive Headroom

Power availability ultimately limits scalability more than any other factor. Utility connections, transformers, generators, and distribution systems represent long-lead-time infrastructure that’s expensive to upgrade. Plan power capacity for 150-200% of anticipated peak demand. This seems excessive until you consider AI workloads, density increases, and business growth trajectories. Include provisions for additional utility feeds even if not immediately connected. Design distribution systems—busways, panel boards, UPS systems—that can expand without replacing existing infrastructure. The cost of oversizing power infrastructure upfront is trivial compared to the expense and disruption of upgrading under operational pressure.
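The 150-200% guideline translates into simple arithmetic. The sketch below assumes a hypothetical 1,200 kW anticipated peak and 400 kW modules, and it ignores losses, power factor, and redundancy topology, all of which a real design must add.

```python
import math
from typing import Tuple


def power_plan(peak_kw: float, module_kw: float, factor: float = 1.5) -> Tuple[float, int, int]:
    """Return (provisioned kW, modules needed at peak, modules the provision supports)."""
    if not 1.5 <= factor <= 2.0:
        raise ValueError("guideline is 150-200% of anticipated peak demand")
    provisioned_kw = peak_kw * factor
    modules_at_peak = math.ceil(peak_kw / module_kw)            # what the forecast needs
    modules_supported = math.floor(provisioned_kw / module_kw)  # what the feed can carry
    return provisioned_kw, modules_at_peak, modules_supported


for factor in (1.5, 2.0):
    print(factor, power_plan(1200, 400, factor))
# 1.5 (1800.0, 3, 4)
# 2.0 (2400.0, 3, 6)
```

In this example the forecast needs three modules, but provisioning at 150% leaves room for a fourth, and at 200% for six, without reopening the utility connection.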

4. Implement Modular Cooling Architecture

Cooling systems must scale in lockstep with compute density. Design cooling infrastructure modularly—whether air handling units, chillers, or liquid cooling distribution—so capacity additions integrate without disrupting existing systems. Plan for future liquid cooling even if starting with air—install piping infrastructure, plan equipment space, establish heat rejection capacity that accommodates both. Include monitoring systems that track cooling capacity and efficiency at granular levels, enabling proactive expansion before thermal constraints limit deployment. Consider cooling distribution paths that allow new modules to tap into existing infrastructure without extensive rework.
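Granular monitoring pays off when it drives a decision. A minimal sketch, assuming linear load growth, an 80% utilization trigger, and a known equipment lead time (all hypothetical parameters): it estimates when measured heat load will cross the trigger and how soon new cooling capacity must be ordered to arrive first.

```python
import math
from typing import Optional


def months_until_threshold(load_kw: float, capacity_kw: float,
                           growth_kw_per_month: float, threshold: float = 0.8) -> Optional[int]:
    """Months until heat load crosses threshold x capacity, assuming linear growth."""
    limit_kw = capacity_kw * threshold
    if load_kw >= limit_kw:
        return 0
    if growth_kw_per_month <= 0:
        return None  # never crosses under this simple linear model
    return math.ceil((limit_kw - load_kw) / growth_kw_per_month)


def order_by_month(load_kw: float, capacity_kw: float, growth_kw_per_month: float,
                   lead_time_months: int, threshold: float = 0.8) -> Optional[int]:
    """Latest month to order extra cooling so it lands before the threshold."""
    months = months_until_threshold(load_kw, capacity_kw, growth_kw_per_month, threshold)
    if months is None:
        return None
    return max(months - lead_time_months, 0)


print(months_until_threshold(500, 1000, 25))  # 12
print(order_by_month(500, 1000, 25, 6))       # 6
```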

5. Build Network Fabric for Tomorrow’s Bandwidth

Network infrastructure is often underestimated in scalability planning. Deploy fiber and copper pathways generously—it’s nearly impossible to add conduit capacity later. Install spine-switch infrastructure sized for full build-out, even if initially underutilized. Plan IP addressing schemes with massive address space to avoid renumbering. Implement network segmentation that allows new modules to integrate without redesigning existing architecture. Consider future requirements for high-bandwidth GPU interconnects, storage networks, and management traffic. Network limitations that emerge during scaling initiatives cause disproportionate disruption and expense.
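Sizing the address plan for full build-out can be checked with Python’s standard `ipaddress` module. The sketch assumes a hypothetical private 10.40.0.0/16 block per site and a /22 (1,024 addresses) per module; both sizes are illustrative.

```python
import ipaddress
from itertools import islice
from typing import List, Tuple


def plan_module_subnets(site_block: str, module_prefix: int,
                        modules: int) -> Tuple[List, int]:
    """Allocate one equal-sized subnet per module from a site-wide block."""
    site = ipaddress.ip_network(site_block)
    if module_prefix < site.prefixlen:
        raise ValueError("module subnet cannot be larger than the site block")
    capacity = 2 ** (module_prefix - site.prefixlen)  # modules the block can ever hold
    if modules > capacity:
        raise ValueError(f"{site} holds only {capacity} /{module_prefix} module subnets")
    return list(islice(site.subnets(new_prefix=module_prefix), modules)), capacity


subnets, capacity = plan_module_subnets("10.40.0.0/16", 22, 3)
print(capacity)                    # 64
print([str(s) for s in subnets])  # ['10.40.0.0/22', '10.40.4.0/22', '10.40.8.0/22']
```

Because the /16 is reserved up front, the 64th module receives its subnet from the same plan as the first, and nothing already deployed has to be renumbered.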

6. Plan for Technology Refresh and Lifecycle Management

Scalable infrastructure acknowledges that modules have lifecycles. Plan from the beginning how modules will be refreshed, upgraded, or decommissioned. Design systems where individual modules can be taken offline for maintenance or replacement without impacting others. Establish financial reserves and operational procedures for lifecycle management. Consider whether modules will be upgraded in place or replaced entirely. The most scalable designs make module refresh a routine operational activity rather than a disruptive special project.

Bringing It All Together

Building scalable modular data centers is fundamentally about making decisions today that preserve options tomorrow. Every choice—from power infrastructure sizing to lifecycle planning—either enables or constrains future growth. The organizations that excel at scalable modular deployment recognize this and approach initial design with the discipline to plan comprehensively, the wisdom to overprovision strategically, and the foresight to standardize rigorously.

Scalability isn’t achieved through any single consideration; it emerges from the interaction of thoughtful decisions across power, cooling, networking, and lifecycle management. When these elements align, the result is infrastructure that performs as well at scale as it did on day one. When they don’t, organizations find themselves locked into expensive retrofits, operational complexity, and constraints that limit business agility precisely when growth demands flexibility.

The investment in building true scalability—the oversized power feeds, the comprehensive monitoring, the rigorous standardization—seems excessive during initial deployment. But this investment pays compounding returns with every expansion phase, every technology refresh, and every business opportunity that demands rapid infrastructure response. Build for scalability from day one, and growth becomes your competitive advantage rather than your operational challenge.

-

Containerized data centers—complete computing facilities housed within shipping container-sized units—represent one of the most practical innovations in IT infrastructure. What began as a solution for remote deployments and emergency scenarios has evolved into a mainstream approach that addresses challenges facing enterprises, telecommunications providers, and cloud operators alike. These self-contained units arrive on-site as fully integrated systems with power distribution, cooling, servers, networking, and monitoring already installed and tested.

The modular approach matters because it solves the fundamental dilemma that has plagued data center strategy for decades: how to build infrastructure substantial enough to support ambitious growth while remaining nimble enough to pivot when those ambitions change.

For organizations navigating the complexities of edge computing, rapid expansion, or resource-constrained environments, containerized data centers offer a compelling value proposition that extends far beyond their compact form factor. Understanding their benefits requires looking beyond the obvious portability to examine how they fundamentally change the economics, timelines, and operational realities of deploying computing infrastructure.

Deployment Speed That Transforms Business Timelines

The most immediate benefit is deployment speed that traditional construction cannot match. Conventional data center builds require 18-24 months from planning to operation. Containerized units compress this timeline to weeks or a few months at most, arriving as complete, tested systems requiring only connection to power and network. This speed advantage translates directly to business value: capturing market opportunities before competitors, responding to customer demands without delay, and generating revenue from infrastructure investments months or years earlier.

Capital Efficiency and Financial Flexibility

Containerized data centers reshape infrastructure economics favorably. Traditional builds demand massive upfront capital expenditure based on projected future needs. Containerized approaches enable incremental investment, where each unit is deployed as demand materializes, aligning capital outlay with actual requirements and revenue generation. Containerized units can also be funded as operational expenses through leasing or infrastructure-as-a-service arrangements. For organizations testing new markets or managing uncertain growth trajectories, this financial flexibility is transformative.
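The difference between building for the forecast on day one and deploying units as demand materializes can be illustrated by counting idle capacity. This is a minimal sketch with a hypothetical four-year demand forecast and 400 kW units; it counts stranded kilowatts, not money, so it is a proxy for capital committed ahead of need rather than a financial model.

```python
import math
from typing import List, Tuple


def stranded_capacity(demand_kw_by_year: List[int],
                      unit_kw: int) -> Tuple[List[int], List[int], List[int]]:
    """Return (units in service, idle kW if incremental, idle kW if built upfront)."""
    units = [math.ceil(d / unit_kw) for d in demand_kw_by_year]  # deploy just enough each year
    upfront_units = max(units)                                   # build the final size on day one
    idle_incremental = [n * unit_kw - d for n, d in zip(units, demand_kw_by_year)]
    idle_upfront = [upfront_units * unit_kw - d for d in demand_kw_by_year]
    return units, idle_incremental, idle_upfront


units, idle_inc, idle_up = stranded_capacity([300, 700, 1200, 1500], 400)
print(units)     # [1, 2, 3, 4]
print(idle_inc)  # [100, 100, 0, 100]
print(idle_up)   # [1300, 900, 400, 100]
```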

Operational Simplicity Through Standardization

Managing diverse facilities with custom configurations creates operational complexity that scales poorly. Containerized deployments enforce beneficial standardization—every unit shares identical power distribution, cooling systems, monitoring interfaces, and maintenance procedures. Staff trained on one container can work confidently on any other. Spare parts inventory consolidates around standard components. Remote management becomes practical when all units present consistent interfaces. This operational simplicity reduces cost and complexity while improving reliability.

Portability and Redeployability

Unlike traditional facilities permanently anchored to locations, containerized data centers offer genuine portability. When business priorities shift or better locations emerge, containers can relocate to where they deliver maximum value. This redeployability protects infrastructure investment against location-specific risks and enables temporary deployments—supporting construction projects, disaster recovery, special events, or seasonal demand spikes. Equipment refresh strategies benefit as newer containers replace older units that relocate to less demanding applications.

Perfect Fit for Edge Computing Requirements

Edge computing’s explosive growth demands distributed infrastructure at network edges rather than centralized facilities. Containerized data centers are purpose-built for this reality. Their compact form factor fits constrained urban locations, cell tower sites, retail environments, and industrial facilities where traditional construction is impractical. They operate reliably in diverse environmental conditions with minimal on-site support. For organizations building edge computing capabilities, containerized approaches offer the only practical path to deploy distributed infrastructure at the scale and speed business demands.

Predictable Performance and Reliability

Containerized units are manufactured and tested in controlled factory environments, ensuring consistent quality that field construction struggles to match. Every system undergoes rigorous testing before shipment: power distribution under load, cooling capacity verification, and network validation. This factory-quality approach eliminates many of the startup issues that plague traditional builds. Organizations gain confidence that what they specified is what they receive, reducing the risk that the infrastructure falls short of requirements.

Enhanced Sustainability Profile

Containerized data centers support sustainability goals through multiple mechanisms. Factory construction generates less waste than field assembly. Efficient designs improve power usage effectiveness (PUE) through integrated cooling and power management. Compact form factors reduce material consumption. Many modern containers integrate on-site energy features such as solar panels and battery storage, and can participate in demand-response programs. The ability to relocate units allows infrastructure to move toward renewable energy sources or locations with better efficiency characteristics.
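Power usage effectiveness is defined as total facility energy divided by the energy delivered to IT equipment, so 1.0 is the theoretical ideal. A minimal sketch of the calculation, using hypothetical annual meter readings:

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("facility total must include the IT load")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical year: 1,300 MWh drawn by the unit, 1,000 MWh of it reaching IT equipment.
ratio = pue(1_300_000, 1_000_000)
print(round(ratio, 2))               # 1.3
print(f"{ratio - 1:.0%} overhead")  # 30% overhead
```

Energy saved in cooling and power conversion lowers the numerator without changing the IT work done, which is why integrated cooling and power management show up directly in this ratio.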

Scalability Without Disruption

Traditional expansion often disrupts existing operations through construction activity and system modifications. Containerized approaches enable non-disruptive scaling—new units deploy alongside existing ones, connecting to shared infrastructure without touching operational systems. This modular growth path allows capacity additions during normal business hours without maintenance windows or service interruptions. The incremental nature provides flexibility to scale at whatever pace matches actual demand.

Disaster Recovery and Business Continuity

Containerized data centers excel in disaster recovery scenarios. Their rapid deployment means recovery sites can be operational in days rather than months following disasters. Portability allows strategic pre-positioning, with containers stored in secure locations ready to deploy quickly when needed. The standardization that simplifies operations also simplifies disaster recovery planning—runbooks and procedures apply consistently across all units.

Security and Access Control

Physical security benefits from containerized design. Each unit is a self-contained facility with controlled access points, integrated monitoring, and security systems. Environmental isolation—protection from dust, humidity, temperature extremes—comes standard, extending equipment life and improving reliability in challenging locations. Containers can incorporate additional security features—reinforced construction, advanced locking mechanisms, intrusion detection—tailored to specific deployment requirements.

Reduced Site Requirements and Flexibility

Traditional data centers demand extensive site preparation—foundations, building construction, utility connections, cooling towers. Containerized units minimize these requirements dramatically, often needing only a level pad and utility connections. This reduced site footprint enables deployment in locations where traditional construction is impossible—dense urban areas with limited space, temporary sites, leased facilities with restricted modification rights, or remote locations lacking construction infrastructure.

Proven in Demanding Environments

Containerized data centers have demonstrated reliability in some of the world’s most challenging environments—from arctic research stations to desert oil fields, from disaster zones to military forward operating bases. This proven track record in extreme conditions provides confidence for conventional deployments, demonstrating the technology has matured beyond experimental status to become proven infrastructure suitable for mission-critical applications.

Containerized Data Centers: A Comprehensive Value Proposition

The benefits of containerized data centers compound rather than simply add. Deployment speed enables business agility. Capital efficiency reduces financial risk. Operational simplicity lowers ongoing costs. Portability protects investments. Each benefit reinforces others, creating value greater than the sum of individual advantages.

For modern business workloads—particularly those requiring distributed infrastructure, rapid deployment, operational simplicity, or deployment flexibility—containerized data centers have shifted from alternative option to logical default. For edge computing, rapid expansion, distributed operations, temporary deployments, or environments where traditional construction is impractical, containerized approaches offer compelling advantages that fundamentally improve how IT infrastructure supports business objectives.

-

Artificial intelligence is rewriting the rulebook for data center design. The computational demands of training large language models and running inference at scale have exposed the limitations of traditional infrastructure. What worked for web servers fails catastrophically when confronted with GPU clusters consuming 50 kilowatts per rack and generating overwhelming heat loads.

The question facing the industry isn’t whether these trends will become standard practice, but how quickly organizations can adopt them before their existing infrastructure becomes a competitive liability rather than a strategic asset.

The data centers being designed today aren’t just accommodating AI workloads—they’re being architected from the ground up around AI’s extreme requirements. These emerging design trends represent the industry’s response to infrastructure challenges that have become urgent imperatives as AI deployment accelerates.

1. Liquid Cooling Becomes Standard, Not Exception

The most visible trend is the wholesale shift from air to liquid cooling. Physics dictates this transition—air cooling hits maximum practical limits around 50 kW per rack, while AI configurations routinely demand 50-100+ kW. Direct-to-chip liquid cooling is becoming the baseline expectation rather than exotic technology. Rear-door heat exchangers provide a bridge solution for transitioning facilities. Immersion cooling is moving from research to production for the highest-density clusters. The infrastructure implications are profound: coolant distribution systems, heat rejection capacity, and facility design all pivot around liquid cooling as the primary thermal management strategy.
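The reason liquid scales where air does not comes down to the heat balance Q = ṁ · cp · ΔT: water carries far more heat per unit volume than air. The sketch below estimates the coolant flow a direct-to-chip loop would need for a given rack load, assuming plain water (cp ≈ 4,186 J/(kg·K), density ≈ 997 kg/m³) and a 10 K supply/return rise; water-glycol mixes have a lower heat capacity and need somewhat more flow.

```python
WATER_CP = 4186.0      # J/(kg*K), specific heat of water near room temperature
WATER_DENSITY = 997.0  # kg/m^3 at about 25 degC


def coolant_flow_lpm(heat_kw: float, delta_t_k: float,
                     cp: float = WATER_CP, density: float = WATER_DENSITY) -> float:
    """Coolant flow in litres per minute to remove heat_kw at a supply/return delta-T."""
    if delta_t_k <= 0:
        raise ValueError("delta-T must be positive")
    mass_flow_kg_s = heat_kw * 1000 / (cp * delta_t_k)  # from Q = m_dot * cp * dT
    return mass_flow_kg_s / density * 1000 * 60          # kg/s -> m^3/s -> L/min


for rack_kw in (50, 100):
    print(rack_kw, "kW:", round(coolant_flow_lpm(rack_kw, 10)), "L/min at 10 K rise")
# 50 kW: 72 L/min at 10 K rise
# 100 kW: 144 L/min at 10 K rise
```

Doubling the allowed temperature rise halves the required flow, one reason liquid-cooled designs favor higher coolant temperatures.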

2. Power Density Planning Reaches Unprecedented Levels

AI workloads have shattered assumptions about electrical infrastructure. Designing for 5-10 kW per rack made sense for general compute; AI demands planning for 50 kW as baseline with headroom to 100+ kW. This forces fundamental rethinking—from utility feeds sized for massive loads, to busway systems delivering high amperage directly to equipment rows, to rack-level distribution handling what entire rows once consumed. The trend extends to sophisticated monitoring and management systems that track consumption at granular levels, enabling dynamic load balancing and predictive capacity planning.
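To see why rack-level distribution changes so much, convert rack power into current. For a balanced three-phase load, I = P / (√3 · V_LL · PF). The sketch below assumes a 415 V line-to-line supply and a 0.95 power factor (illustrative values; 400 V and 480 V systems are also common) and ignores any continuous-load derating the local electrical code requires.

```python
import math


def three_phase_current_a(power_kw: float, line_voltage_v: float = 415.0,
                          power_factor: float = 0.95) -> float:
    """Line current (A) for a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF)."""
    return power_kw * 1000 / (math.sqrt(3) * line_voltage_v * power_factor)


for rack_kw in (10, 50, 100):
    print(f"{rack_kw:>3} kW rack -> {three_phase_current_a(rack_kw):6.1f} A per phase")
```

Under these assumptions a 10 kW rack draws roughly 15 A per phase, while a 100 kW rack draws about 146 A, which is the kind of load a whole row of general-purpose racks once shared.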

3. Network Architecture Optimized for GPU-to-GPU Communication

Traditional networks prioritize north-south traffic between servers and users. AI training generates massive east-west traffic as thousands of GPUs synchronize during model training. The trend is toward ultra-high-bandwidth, ultra-low-latency fabrics specifically optimized for GPU clustering. InfiniBand deployments are becoming standard. Network topology is shifting from hierarchical designs to spine-and-leaf or flat architectures that minimize hop counts. The critical insight: in AI workloads, network latency directly impacts training speed, making network performance as important as compute capacity.
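Two numbers summarize whether a leaf-spine fabric suits GPU clusters: the oversubscription ratio on each leaf (1:1 means non-blocking) and how many endpoints the fabric can reach while keeping every leaf-to-leaf path at a single spine hop. The sketch below assumes a simple two-tier design in which every leaf has exactly one uplink to every spine; the port counts and speeds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeafSpine:
    spines: int           # number of spine switches
    spine_ports: int      # ports per spine; each leaf uses one port on every spine
    leaf_down_ports: int  # GPU/server-facing ports per leaf
    down_gbps: int        # speed of each server-facing port
    up_gbps: int          # speed of each leaf-to-spine uplink

    @property
    def oversubscription(self) -> float:
        """Server-facing bandwidth divided by spine-facing bandwidth on one leaf."""
        return (self.leaf_down_ports * self.down_gbps) / (self.spines * self.up_gbps)

    @property
    def max_endpoints(self) -> int:
        """Endpoints reachable with every leaf-to-leaf path crossing one spine."""
        return self.spine_ports * self.leaf_down_ports  # max leaves = spine port count


ai_fabric = LeafSpine(spines=16, spine_ports=64, leaf_down_ports=32, down_gbps=400, up_gbps=800)
general = LeafSpine(spines=8, spine_ports=64, leaf_down_ports=32, down_gbps=400, up_gbps=400)
print(ai_fabric.oversubscription, ai_fabric.max_endpoints)  # 1.0 2048
print(general.oversubscription)                              # 4.0
```

A 4:1 leaf may be acceptable for north-south web traffic, but during synchronized training it would leave GPUs waiting on the network.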

4. Pod-Based Architecture Replaces Uniform Layouts

Uniform rows of identical racks are giving way to pod-based designs where infrastructure is optimized for specific AI workload types. Training pods concentrate maximum power density, liquid cooling capacity, and high-bandwidth interconnects where GPUs work in tight coordination. Inference zones prioritize low latency for production workloads. Development areas maintain flexibility for changing requirements. This recognizes that AI workloads aren’t homogeneous—different phases have dramatically different infrastructure needs, requiring specialized neighborhoods within the facility.

5. Modular and Prefabricated Construction Accelerates

The urgency around AI deployment is driving aggressive adoption of modular construction. Prefabricated electrical rooms, cooling systems, and complete data halls arrive as tested, integrated units deployable in weeks rather than months. This trend addresses both speed and complexity—factory-built modules undergo rigorous testing where issues can be corrected efficiently. The approach enables rapid iteration as vendors refine designs based on real-world experience, incorporating improvements faster than traditional custom construction allows.

6. Vertical Integration of Compute, Storage, and Networking

AI workloads drive a trend toward tightly integrated infrastructure in which compute, storage, and networking are co-designed rather than treated as separate layers. Training clusters need storage that feeds data to GPUs at aggregate rates of multiple terabytes per second, pushing storage physically closer to compute. NVMe over Fabrics (NVMe-oF) minimizes protocol overhead by extending NVMe access across the network. Parallel file systems aggregate bandwidth across nodes. AI performance depends on the entire stack working in concert; optimizing any single component in isolation creates bottlenecks elsewhere.

7. Flexibility and Future-Proofing Take Priority

Given AI technology’s rapid evolution, designs emphasize adaptability over current optimization. This means electrical infrastructure with capacity for next-generation demands, cooling systems with thermal headroom, and network fabrics that can integrate emerging standards. The recognition: an AI data center optimized perfectly for today will be obsolete in 18 months, while one designed for flexibility maintains value through multiple technology generations.

8. Sustainability Integration from Day One

AI’s enormous power consumption is forcing sustainability from afterthought to core design principle. Trends include on-site renewable energy, waste heat recovery redirecting thermal energy to useful purposes, and power management scheduling training during renewable availability. Liquid cooling’s efficiency advantages are leveraged for sustainability—higher coolant temperatures reduce chiller loads and enable more free cooling hours. Carbon tracking and reporting capabilities are becoming foundational elements.
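Scheduling training during renewable availability reduces, in its simplest form, to finding the best contiguous window in a forecast. A minimal sketch, assuming an hourly forecast of the renewable share of supply (the numbers below are invented for illustration) and a job that must run uninterrupted:

```python
from typing import List, Tuple


def best_window(renewable_pct: List[float], job_hours: int) -> Tuple[int, float]:
    """Start hour and mean renewable share of the best contiguous job window."""
    if not 0 < job_hours <= len(renewable_pct):
        raise ValueError("job must fit inside the forecast horizon")
    window = sum(renewable_pct[:job_hours])
    best_sum, best_start = window, 0
    for start in range(1, len(renewable_pct) - job_hours + 1):
        # Slide the window one hour: add the new hour, drop the oldest.
        window += renewable_pct[start + job_hours - 1] - renewable_pct[start - 1]
        if window > best_sum:
            best_sum, best_start = window, start
    return best_start, best_sum / job_hours


forecast = [20, 25, 30, 55, 70, 80, 75, 40, 30, 25]  # hypothetical % renewable, hours 0..9
print(best_window(forecast, 3))  # (4, 75.0)
```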

9. Operational Simplification Through Automation

Managing liquid cooling, extreme power densities, and heterogeneous AI workloads is driving aggressive automation. AI-ready designs incorporate comprehensive monitoring tracking thousands of sensors. Machine learning is applied to facility management itself—predicting maintenance needs, optimizing cooling efficiency, and identifying anomalies before failures. Remote management reduces dependence on on-site expertise, critical given industry skills shortages.
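The article describes machine learning for anomaly detection; a much simpler statistical baseline, shown here as a stand-in rather than an ML model, illustrates the underlying principle of flagging sensor readings that depart sharply from recent behavior. The window length and the 3-sigma limit are assumptions to be tuned per sensor.

```python
from statistics import mean, stdev
from typing import List


def flag_anomalies(readings: List[float], window: int = 12, z_limit: float = 3.0) -> List[int]:
    """Indices of readings more than z_limit standard deviations from the trailing mean."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(readings[i] - mu) / sigma > z_limit:
            flagged.append(i)
    return flagged


# Hypothetical rack inlet temperatures (degC) sampled every 5 minutes.
inlet = [22.0, 22.4] * 6 + [22.2, 27.0]
print(flag_anomalies(inlet))  # [13]
```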

10. Resilience Models Reconsidered

Traditional design focused on eliminating single points of failure through redundancy. AI workloads are driving a trend toward application-level resilience that accepts infrastructure failures and manages them through checkpointing and job migration. This enables designs that trade some infrastructure redundancy for increased performance; training clusters might run on N+0 power configurations, with no capacity beyond the load itself. The trend reflects the maturation of AI frameworks that handle hardware failures gracefully.
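How often to checkpoint becomes the key parameter when resilience moves into the application. A widely used first-order rule is Young’s approximation, τ ≈ √(2δM), where δ is the time to write a checkpoint and M is the mean time between failures seen by the job. The sketch below uses hypothetical figures (1,000 nodes, 50,000-hour node MTBF, 5-minute checkpoints) and assumes independent, exponentially distributed failures, so the job is interrupted if any node fails.

```python
import math


def job_mtbf_hours(node_mtbf_hours: float, nodes: int) -> float:
    """MTBF of a job that stops when any one of its nodes fails (independent failures)."""
    return node_mtbf_hours / nodes


def young_interval_hours(checkpoint_hours: float, mtbf_hours: float) -> float:
    """Young's first-order approximation of the optimal checkpoint interval."""
    return math.sqrt(2 * checkpoint_hours * mtbf_hours)


def overhead_fraction(checkpoint_hours: float, interval_hours: float, mtbf_hours: float) -> float:
    """Approximate fraction of time lost: writing checkpoints plus rework after failures."""
    return checkpoint_hours / interval_hours + interval_hours / (2 * mtbf_hours)


m = job_mtbf_hours(50_000, 1_000)      # 50 h between job interruptions
tau = young_interval_hours(5 / 60, m)  # about 2.9 h between checkpoints
print(round(tau, 2), f"{overhead_fraction(5 / 60, tau, m):.1%}")  # 2.89 5.8%
```

In this model, removing redundancy lowers M, which shortens the optimal interval and raises the overhead, so the N+0 trade pays off only while that overhead stays acceptable.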

The Convergence Ahead

These trends are interconnected responses to AI’s infrastructure challenges. Liquid cooling enables higher power density. Pod architecture concentrates that density strategically. Network optimization ensures GPU collaboration. Modular construction accelerates deployment. Flexibility ensures relevance as technology evolves.

What emerges is a new paradigm where AI requirements drive decision-making from site selection through operations. The facilities being designed today look fundamentally different from those built five years ago—denser, more complex, more automated, and vastly more capable. For organizations planning AI infrastructure, these trends provide a roadmap. The data centers that will power tomorrow’s AI breakthroughs are being designed today.

-

The data center industry stands at an inflection point. Traditional construction methods that served us well for decades—custom designs, lengthy build cycles, massive upfront investments—are increasingly misaligned with the realities organizations face today. The acceleration of digital transformation, the explosion of edge computing, the relentless pace of technological change, and the mounting pressure for sustainability have collectively created an environment where speed, flexibility, and efficiency matter more than ever.

As computing power continues its inexorable journey from centralized facilities to the distributed edge of the network, modular data centers aren’t just participating in IT’s future—they’re defining the very infrastructure foundation upon which that future will be built.

Modular data centers aren’t emerging as the future because of a single breakthrough or trend—they’re rising to dominance because they address multiple simultaneous challenges that conventional approaches simply can’t solve. Understanding why modular infrastructure has shifted from alternative option to inevitable standard requires examining the converging forces reshaping how we think about IT infrastructure deployment.

1. Speed to Market is Critical

In today’s digital economy, speed determines competitive advantage. Modular data centers deploy in weeks or months rather than the 18-24 months traditional builds require. When a telecommunications provider needs 5G capacity or an enterprise must support sudden geographic expansion, modular infrastructure delivers operational capability at the pace business actually moves, capturing opportunities before windows close.

2. Capital Efficiency Matches Modern Financial Realities

Traditional data centers demand massive upfront investments based on projected future needs, a risky proposition when technology shifts unpredictably. Modular approaches enable pay-as-you-grow deployment where capacity additions align directly with actual demand. Organizations avoid the capital trap of overbuilding while maintaining flexibility to scale aggressively when opportunities arise.

3. Edge Computing Requires Distributed Architecture

The explosive growth of IoT, autonomous systems, 5G networks, and latency-sensitive applications is pushing computing toward the network edge. These distributed deployments, at cell towers, retail locations, and manufacturing facilities, demand compact, self-contained solutions that operate reliably with limited on-site support. Modular data centers deliver consistent performance regardless of location while simplifying management of hundreds or thousands of edge sites.

4. Standardization Solves Operational Complexity

Managing disparate facilities with custom configurations creates operational nightmares that compound over time. Modular infrastructure enforces beneficial standardization: consistent power distribution, cooling strategies, monitoring systems, and maintenance procedures across deployments. This uniformity dramatically simplifies operations, enables centralized management, and minimizes the specialized knowledge needed at each location. Solutions proven at one site transfer directly to others.

5. Factory Construction Quality Exceeds Field Assembly

Building infrastructure in controlled factory environments rather than on construction sites fundamentally improves quality. Factory assembly enables rigorous pre-deployment testing, consistent build quality unaffected by weather, and the ability to identify issues when they’re easiest to fix. Components arrive as tested, validated systems rather than parts requiring field integration, reducing commissioning time and delivering higher reliability from day one.

6. Flexibility Accommodates Technology Evolution

The IT landscape evolves relentlessly; what’s cutting-edge today becomes obsolete within years. Modular infrastructure adapts to changing requirements without wholesale facility replacement. Individual modules can be upgraded, repurposed, or replaced as needs change. Organizations can deploy different configurations for different workload types (high-density GPU modules for AI, standard configurations for general compute), all within a coherent architectural framework.

7. Sustainability Demands Efficiency

Environmental responsibility has shifted from optional to mandatory. Modern modular data centers incorporate advanced efficiency technologies (optimized cooling, intelligent power management, renewable energy integration) as standard features rather than expensive retrofits. Their compact design minimizes material usage and construction waste. For organizations pursuing aggressive sustainability goals, modular infrastructure provides a clear pathway to reduced environmental impact.

8. Risk Mitigation Through Incremental Deployment

Traditional construction concentrates enormous risk into single, massive projects where cost overruns or design flaws can have catastrophic impact. Modular approaches distribute risk across smaller, incremental deployments. Organizations can pilot solutions, validate performance in real-world conditions, and refine approaches before scaling broadly. If business conditions change, the financial exposure of any single module deployment remains manageable.

9. Geographic Flexibility Opens New Markets

Modular infrastructure deploys in locations where traditional construction is impractical: remote areas lacking construction resources, temporary sites with uncertain viability, or constrained urban locations where permits and logistics are prohibitive. This flexibility enables organizations to serve previously unreachable markets. The portable nature of some modular designs even allows relocation if business needs shift.

10. Vendor Ecosystem Maturity Reduces Risk

The modular data center market has matured significantly, with established vendors offering proven solutions, comprehensive support, and clear upgrade paths. Organizations can select from multiple vendors with track records of successful deployments and reference architectures validated across diverse use cases. The availability of financing options, managed service models, and turnkey solutions further reduces barriers to adoption.

11. Hybrid Strategies Demand Infrastructure Agility

Modern IT strategies increasingly embrace hybrid architectures combining on-premises infrastructure, multiple cloud providers, and edge computing. This diversity demands infrastructure that can deploy rapidly and adapt to shifting workload placement decisions. Modular data centers provide the agility these strategies require, enabling organizations to quickly establish presence in new regions, respond to data sovereignty requirements, or rebalance workloads as economics and performance dictate.

12. Skills Shortage Makes Simplicity Essential

The data center industry faces a persistent shortage of qualified talent. As experienced professionals retire and demand accelerates, the gap between available expertise and operational requirements widens. Modular infrastructure addresses this through operational simplification (remote monitoring, automated management, standardized procedures) that allows smaller teams to manage larger deployments, reducing dependence on scarce expertise.

The Convergence of Inevitability

These factors reinforce each other, creating a compelling case that transcends any single advantage. Speed enables edge strategies. Standardization improves sustainability. Capital efficiency reduces risk. Flexibility accommodates evolution. Together, they represent a fundamental shift in how IT infrastructure gets deployed and managed.

The future isn’t about choosing between traditional and modular approaches—it’s about recognizing that organizational requirements increasingly align with modular strengths while exposing traditional limitations. As edge computing expands, as deployment speed becomes more critical, as financial discipline intensifies, and as sustainability requirements tighten, modular data centers transition from alternative option to logical default.

The data centers powering tomorrow’s digital infrastructure will be faster to deploy, more efficient to operate, more flexible in configuration, and more sustainable in impact than those we built yesterday. They’ll be modular not because it’s trendy, but because it’s the approach that best aligns with the realities organizations face.

-

After many years of toiling for blue-chip (and smaller) companies on infrastructure projects ranging from submarine to in-orbit, and having navigated the millennium bug and the access restrictions of the pandemic, we reached a conclusion. Our combined experience, from those hardships and the many hours spent under racks, crawling in fiber pits, and staring at caesium lasers, has given us a practical perspective and knowledge that we believe will be useful, even invaluable, to those already in the data center ecosystem and to those new to it.

After some deliberation, this company was born…

We are nanoDC…!

Why do we matter…?

Well…

Perhaps the most profound impact on the DC ecosystem is the advent of modular edge design. That impact lies not in its technical specifications, but in its power to democratize access to advanced computing infrastructure.

For decades, sophisticated data center capabilities remained the exclusive domain of large enterprises with deep pockets and specialized expertise. Modular design changes this equation fundamentally.

Small municipalities can now deploy edge computing to power smart city initiatives. Regional healthcare networks can offer the same low-latency telehealth services as major hospital systems. Local manufacturers can compete globally by leveraging edge AI and real-time analytics previously available only to industry giants.

This democratization represents more than economic efficiency—it’s about unlocking human potential at scale. When a small business in a rural area can access the same computing power as a Fortune 500 company, when a startup can deploy infrastructure in weeks rather than years, we create conditions for innovation that weren’t previously possible.

Modular edge computing isn’t just infrastructure; it’s an equalizer that allows the best ideas to win regardless of where they originate or who conceives them.

Modular Edge Data Centers are how we will achieve this;

… In Partnership;

… Together!